‘Web services’ or ‘software as a service (SaaS)’ refers to a highly significant model for managing software and data within the e-business age. The web services model involves managing and performing all types of business processes and activities through accessing web-based services rather than running a traditional executable application on the processor of your local computer.

1. Benefits of web services or SaaS

SaaS is usually paid for on a subscription basis, so services can potentially be switched on and off, or paid for according to usage; hence they are also known as ‘on demand’. The main business benefit of these systems is that installation and maintenance costs such as upgrades are effectively outsourced. Cost savings are made on both the server and client sides, since the server software and databases are hosted externally and client applications software is usually delivered through a web browser or a simple application that is downloaded via the web.

In research conducted in the US and Canada by Computer Economics (2006), 91% of companies showed a first-year return on investment (ROI) from SaaS. Of these, 57% of the total had economic benefits which exceeded the SaaS costs and 34% broke even in year one. The same survey showed that in 80% of cases, the total cost of ownership (TCO) came in either on budget or lower. There would be few cases of traditional applications where these figures can be equalled.

2. Challenges of deploying SaaS

Although the cost reduction arguments of SaaS are persuasive, what are the disadvantages of this approach? The pros and cons are similar to the ‘make or buy’ decision discussed in Chapter 12. SaaS will obviously have less capability for tailoring to exact business needs than a bespoke system.

The most obvious disadvantage of using SaaS is dependence on a third party to deliver services over the web, which has these potential problems:

- Downtime or poor availability if the network connection or the server hosting the application fails.

- Lower performance than a local database. You know from using Gmail or Hotmail that although responsive, they cannot be as responsive as using a local e-mail package like Outlook.

- Reduced data security, since traditionally data would be backed up locally by in-house IT staff (ideally also off-site). Since failures in the system are inevitable, companies using SaaS need to be clear how backups and restores are managed and what support is available for handling problems, as defined within the service level agreement (SLA).

- Data protection – since customer data may be stored in a different location it is essential that it is sufficiently secure consistent with the data protection and privacy laws discussed in Chapter 4.

You can see that there are several potential problems which need to be evaluated on a case-by-case basis when selecting SaaS providers. Disaster recovery procedures are particularly important since many SaaS applications such as customer relationship management and supply chain management are mission-critical. Managers need to question service levels since often services are delivered to multiple customers from a single server in a multi-tenancy arrangement rather than a single-tenancy arrangement. This is similar to the situation with the shared server or dedicated server we discussed earlier for web hosting. An example of this in practice is shown in Box 3.9.

An example of a consumer SaaS, word processing, would involve visiting a web site which hosts the application rather than running a word processor such as Microsoft Word on your local computer through starting ‘Word.exe’. The best-known consumer service for online word processing and spreadsheet use is Google Docs (http://docs.google.com) which was launched following the purchase in 2006 by Google of start-up Writely (www.writely.com). Google Docs also enables users to view and edit documents offline, through Google Gears, an open source browser extension. ‘Microsoft Office Live’ is a similar initiative from Microsoft.

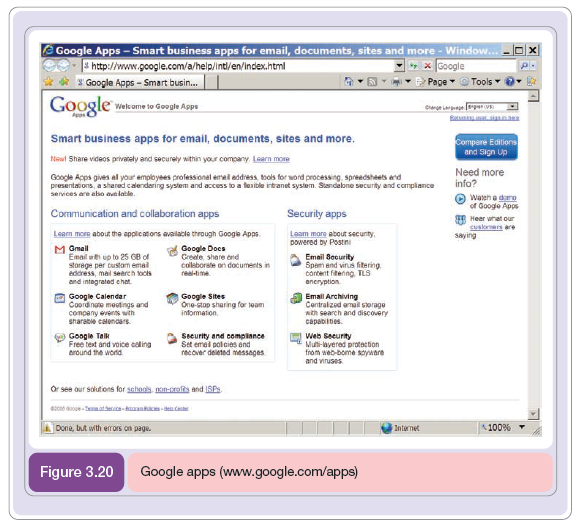

As an indication of the transformations possible through web services, see Figure 3.20, which shows how Google’s mission to ‘manage the World’s information’ also applies to supporting organizational processes. Google Apps enables organizations to manage many of their activities. The basic service is free; the Premier Edition, which includes more storage space and security, costs $50 per user account per year.

A related concept to web services is utility computing. Utility computing involves treating all aspects of IT as a commodity service such as water, gas or electricity where payment is according to usage. A subscription is usually charged per month according to the number of features, number of users, volume of data storage or bandwidth consumed. Discounts will be given for longer-term contracts. This includes not only software which may be used on a pay-per-use basis, but also using hardware, for example for hosting. An earlier term is ‘applications service providers’ (ASP) which is less widely used now.
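The usage-based charging described above can be illustrated with a small calculation. This is only a sketch: the `Plan` fields, the rates and the 10 per cent long-term discount are invented for illustration and are not taken from any real provider's tariff.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    """Hypothetical tariff; every figure here is invented for illustration."""
    per_user: float                   # monthly charge per user account
    per_gb_storage: float             # monthly charge per GB stored
    per_gb_bandwidth: float           # charge per GB transferred
    long_term_discount: float = 0.10  # assumed discount for contracts of 12+ months


def monthly_charge(plan: Plan, users: int, storage_gb: float,
                   bandwidth_gb: float, contract_months: int = 1) -> float:
    """Return the monthly bill for the given usage."""
    charge = (users * plan.per_user
              + storage_gb * plan.per_gb_storage
              + bandwidth_gb * plan.per_gb_bandwidth)
    # Longer-term contracts attract a discount, as noted in the text.
    if contract_months >= 12:
        charge *= 1 - plan.long_term_discount
    return round(charge, 2)


plan = Plan(per_user=10.0, per_gb_storage=0.5, per_gb_bandwidth=0.1)
print(monthly_charge(plan, users=20, storage_gb=100, bandwidth_gb=500))
print(monthly_charge(plan, users=20, storage_gb=100, bandwidth_gb=500,
                     contract_months=12))
```

The point of the sketch is that the bill follows consumption: halving the number of users or the data stored reduces the charge in the same month, which is not the case with a purchased licence or owned hardware.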

Figure 3.21 shows one of the largest SaaS or utility providers, Salesforce.com, where customers pay from £5 to £50 per user per month according to the facilities used. The service is delivered from the Salesforce.com servers to over 50,000 customers in 15 local languages.

In descriptions of web services you may hear, confusingly, that they access ‘the cloud’, or the term ‘cloud computing’, where the cloud referred to is the Internet (networks are often denoted as clouds on diagrams of network topology). So, for example, if you are accessing your Google Docs then they will be stored somewhere ‘in the cloud’ without you having any knowledge of where that is or how it is managed, since Google stores data on many servers. And of course you can access the document from any location. But there are issues to consider about data stored and served from the cloud: ‘is it secure, is it backed up, is it always available?’. The size of Google’s cloud is indicated by Pandia (2007), which estimated that Google has over 1 million servers running the open-source Linux software.

Think of examples of web services that you or businesses use, and you will soon see how important they are for both personal and business applications. Examples include:

- Web mail readers

- E-commerce account and purchasing management facilities such as Amazon.com

- Many services from Google such as Google Maps, Gmail, Picasa and Google Analytics

- Customer relationship management applications from Salesforce.com and Siebel/Oracle

- Supply chain management solutions from SAP, Oracle and Covisint

- E-mail and web security management from companies like MessageLabs.

From the point of view of managing IT infrastructure these changes are dramatic since traditionally companies have employed their own information systems support staff to manage different types of business applications such as e-mail. A web service provider offers an alternative where the application is hosted remotely or off-site on a server operated by an ASP. Costs associated with upgrading and configuring new software on users’ client computers and servers are dramatically decreased.

2.1. Virtualization

Virtualization is another approach to managing IT resource more effectively. However, it is mainly deployed within an organization. VMware was one of the forerunners offering virtualization services which it explains as follows (VMware, 2008):

The VMware approach to virtualization inserts a thin layer of software directly on the computer hardware or on a host operating system. This software layer creates virtual machines and contains a virtual machine monitor or ‘hypervisor’ that allocates hardware resources dynamically and transparently so that multiple operating systems can run concurrently on a single physical computer without even knowing it.

However, virtualizing a single physical computer is just the beginning. VMware offers a robust virtualization platform that can scale across hundreds of interconnected physical computers and storage devices to form an entire virtual infrastructure.

They go on to explain that virtualization essentially lets one computer do the job of multiple computers, by sharing the resources of a single computer across multiple environments. Virtual servers and virtual desktops let you host multiple operating systems and multiple applications.

So virtualization has these benefits:

- Lower hardware costs through consolidation of servers (see mini case below)

- Lower maintenance and support costs

- Lower energy costs

- Scalability to add more resource more easily

- Standardized, personalized desktops can be accessed from any location, so users are not tied to an individual physical computer

- Improved business continuity.

2.2. Service-oriented architecture (SOA)

The technical architecture used to build web services is formally known as a ‘service-oriented architecture’. This is an arrangement of software processes or agents which communicate with each other to deliver the business requirements.

The main role of a service within SOA is to provide functionality. This is provided by three characteristics:

- An interface with the service which is platform-independent (not dependent on a particular type of software or hardware). The interface is accessible through applications development approaches such as Microsoft .Net or Java and accessed through protocols such as SOAP (Simple Object Access Protocol) which is used for XML-formatted messages, i.e. instructions and returned results to be exchanged between services.

- The service can be dynamically located and invoked. One service can query for the existence of another service through a service directory – for example an e-commerce service could query for the existence of a credit card authorization service.

- The service is self-contained. That is, the service cannot be influenced by other services; rather it will return a required result to a request from another service, but will not change state. Within web services, messages and data are typically exchanged between services using XML.
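The three characteristics above can be sketched in a few lines of Python. This is an illustrative toy, not a real SOAP stack: the `http://example.com/payments` namespace, the `AuthorizeCard` message, the approval rule and the dictionary standing in for a service directory are all assumptions made for the example. Only the SOAP 1.2 envelope namespace and the standard-library `xml.etree.ElementTree` calls are real.

```python
import xml.etree.ElementTree as ET

SOAP_NS = "http://www.w3.org/2003/05/soap-envelope"  # SOAP 1.2 envelope namespace
APP_NS = "http://example.com/payments"               # hypothetical application namespace


def build_request(card_number: str, amount: str) -> bytes:
    """Wrap a hypothetical AuthorizeCard request in a SOAP-style envelope."""
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    request = ET.SubElement(body, f"{{{APP_NS}}}AuthorizeCard")
    ET.SubElement(request, f"{{{APP_NS}}}CardNumber").text = card_number
    ET.SubElement(request, f"{{{APP_NS}}}Amount").text = amount
    return ET.tostring(envelope)


def authorize_card(message: bytes) -> bytes:
    """Toy authorization service: XML in, XML out, no state kept between calls."""
    root = ET.fromstring(message)
    amount = root.find(f".//{{{APP_NS}}}Amount").text
    envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
    response = ET.SubElement(body, f"{{{APP_NS}}}AuthorizeCardResponse")
    # Invented rule for the sketch: approve any amount up to 1000.
    approved = float(amount) <= 1000
    ET.SubElement(response, f"{{{APP_NS}}}Approved").text = str(approved).lower()
    return ET.tostring(envelope)


# A plain dictionary stands in for the service directory.
directory = {"credit-card-authorization": authorize_card}


def checkout(card_number: str, amount: str) -> bool:
    """E-commerce service: locate the authorization service, then invoke it."""
    service = directory.get("credit-card-authorization")
    if service is None:
        raise LookupError("no credit card authorization service registered")
    reply = ET.fromstring(service(build_request(card_number, amount)))
    return reply.find(f".//{{{APP_NS}}}Approved").text == "true"


print(checkout("4111111111111111", "250.00"))
print(checkout("4111111111111111", "5000.00"))
```

The e-commerce code knows only the service's name and its XML message format, so the authorization service could be reimplemented on a different platform without the caller changing. In a real deployment the call would travel over a network rather than being a local function call.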

The examples of web services we have given above all imply a user interacting with the web service. But with the correct business rules and models to follow, there is no need for human intervention and different applications and databases can communicate with each other in real time. A web service such as Kelkoo.com which was discussed in Chapter 2 exchanges information with all participating merchants through XML using an SOA. The concept of the semantic web mentioned above and business applications of web services such as CRM, SCM and ebXML are also based on an SOA approach. In another e-business application example provided by the World Wide Web Consortium at www.w3.org/TR/soap12-part0/, a company travel booking system uses SOAP to communicate with a travel company to book a holiday.

Read Case Study 3.2 to explore the significance and challenges of SOA further.

3. Case Study 3.2: New architecture or just new hype?

Depending on whom you listen to, it could be the most important shift in corporate computing since the advent of the Internet – or it could be just the latest excuse for technology companies to hype their products in a dismal market.

‘We believe it’s the Next Big Thing’, says Henning Kagermann, chairman of SAP, Europe’s biggest software company.

‘It’s the new fashion statement’, counters Mark Barrenechea, chief technology officer of Computer Associates. ‘I’m sceptical.’

The ‘it’ in question goes by the ungainly name of ‘service-oriented architecture’, or SOA for short. According to the big software companies, its impact on computing will be as big as the client-server revolution of the early 1990s, or the arrival of web-based applications with the internet.

‘Every five or 10 years, we see this in the industry’, says John Wookey, the executive in charge of Oracle’s Project Fusion, the giant effort to re-engineer all of the software applications inherited as a result of that company’s various acquisitions.

For those with ambitions to dominate the next phase of corporate software – SAP, Oracle, IBM and Microsoft – it represents an important turning-point. ‘When these transitions occur you have your best opportunity to change the competitive landscape’, adds Mr Wookey.

Yet for customers, the benefits and costs of this next transformation in the underlying computing architecture are still hard to ascertain.

Bruce Richardson, chief research officer at AMR Research, draws attention to the unexpected costs that came with the rise of client-server computing: the soaring hardware and software expenses, the difficulty of supporting such a wide array of machines, and the cost of dealing with security flaws.

‘That ended up being a huge bill’, he notes.

It is hardly surprising that enterprise software companies – those that create the heavy-duty software that big corporations and governments use to run their operations – are so eager to latch on to the next big thing.

An industry still in its infancy is facing potentially disruptive upheaval. New licensing models and ways of delivering software, along with open-source approaches to development and distribution, are turning the young software industry on its head.

At the same time, the maturity of existing applications and the technology platform on which they run has left the best-established enterprise software companies stuck in a period of slow growth.

That is fertile soil for extravagant marketing claims to take root in.

Even if SOA risks being over-hyped, however, it still seems likely to represent an important step forward for today’s often monolithic corporate IT systems.

By harnessing industry-wide technology standards that have been in development since the late 1990s, it promises at least a partial answer to one of the biggest drawbacks of the current computing base: a lack of flexibility that has driven up the cost of software development and forced companies to design their business processes around the needs of their IT systems, rather than the other way around.

Software executives say that the inability to redesign IT systems rapidly to support new business processes, and to link those systems to customers and suppliers, was one of the main reasons for the failure of one of the great early promises of the internet – seamless ‘B2B’, or business-to-business, commerce.

‘It’s what killed the original [B2B] marketplaces’, says Shai Agassi, who heads SAP’s product and technology development.

SAP is certainly further ahead than others in the race to build a more flexible computing platform. While Oracle and Microsoft are busy trying to create coherent packages of software applications from the corporate acquisitions they have made, SAP is halfway through a revamp of its technology that could give it a lead of two years or more.

‘If they’re right, it will be a huge thing for them’, says Charles Di Bona, software analyst at Sanford C. Bernstein.

Underlying the arrival of SOA has been the spread of so-called web services standards – such as the mark-up language XML and communications protocol SOAP – that make it easier for machines to exchange data automatically.

This holds the promise of automating business processes that run across different IT systems, whether inside a single company or spanning several business partners: a customer placing an order in one system could automatically trigger production requests in another and an invoice in a third.

Breaking down the different steps in a business process in this way, and making them available to be recombined quickly to suit particular business needs, is the ultimate goal of SOA. Each step in the process becomes a service, a single reusable component that is ‘exposed’ through a standard interface.

The smaller each of these software components, the more flexibility users will have to build IT systems that fit their particular needs.

SAP has created 300 services so far; that number will rise to 3,000 by the end of this year, says Mr Agassi. Through NetWeaver, the set of ‘middleware’ tools that provide the glue, it has also finalised much of the platform to deliver this new set of services. The full ‘business process platform’ will be complete by the end of next year, SAP says.

‘The factory is running – we have all the tools ready now’, says Peter Graf, head of solution marketing at SAP. To get customers to start experimenting with the new technology, he adds, ‘we need to come up with killer apps’.

The first full-scale demonstration will come from a project known as Mendocino, under which SAP and Microsoft have been working to integrate their ‘back-end’ and ‘front-end’ systems and which is due to be released in the middle of this year.

By linking them to the widely used components of Microsoft’s Office desktop software, SAP’s corporate applications will become easier to use, says Mr Graf: for instance, when a worker enters a holiday in his or her Outlook calendar, it could automatically trigger an approval request to a manager and cross-check with a system that records holiday entitlements.

While such demonstrations may start to show the potential of SOA, however, the real power of this architectural shift is likely to depend on a much broader ecosystem of software developers and corporate users.

‘People want to extend their business processes to get closer to customers’, says Mr Richardson at AMR. To do that through the ‘loosely coupled’ IT systems promised by SOA will require wider adoption of the new technology architecture.

A number of potential drawbacks stand in the way.

Along with uncertainty about the ultimate cost, points out Mr Richardson, is concern about security: what safeguards will companies need before they are willing to let valuable corporate data travel outside their own IT systems, or before they open up their own networks to code developed elsewhere?

A further question is whether SOA can fulfil one of its most important promises: that the technology platforms being created by SAP and others will stimulate a wave of innovation in the software industry, as developers rush to create new and better applications, many of them suited to the specific needs of particular industries or small groups of companies.

That depends partly on whether companies such as SAP can create true technology ‘ecosystems’ around their platforms, much as Microsoft’s success in desktop software depended on its ability to draw developers to its desktop software platform.

‘We were told three years ago that we didn’t know how to partner’, says Mr Agassi at SAP, before dismissing such criticism as ‘quite funny’, given what he says was the success of its earlier software applications in attracting developers. ‘We are more open than we have ever been, we are more standards-based than we have ever been’, he adds – a claim that is contested by Oracle, which has tried to make capital from the fact that its German rival’s underlying technology still depends on a proprietary computing language, ABAP.

However, even if the future SOA-enabled platforms succeed in stimulating a new generation of more flexible corporate software, one other overriding issue remains: rivals such as SAP and Oracle will see little to gain from linking their rival platforms to each other. Full interoperability will remain just a dream.

‘To make SOA real, you have to have a process start in one system and end in another, with no testing or certification needed’, says Mr Barrenechea at Computer Associates – even if those systems are rival ones from SAP and Oracle.

The software giants, he says, ‘have to be motivated to make it work’.

According to Mr Agassi, companies will eventually ‘have to choose’ which of the platforms they want to use as the backbone for their businesses.

The web services standards may create a level of interoperability between these different backbones, but each will still use its own ‘semantics’, or way of defining business information, to make it comprehensible to other, connected systems.

Like a common telephone network, the standards should make it easier to create connections, but they can do nothing if the people on either end of the line are talking a different language.

If different companies in the same industry, or different business partners, adopt different software platforms, there will still be a need for the expensive manual work to link the systems together.

‘You will have to spend the same amount of money on systems integrators that you spend today’, says Mr Graf.

Despite that, the new service-oriented technology should still represent a leap forward from today’s monolithic IT systems. Even the sceptics concede that the gains could be substantial. It should lead to ‘better [software] components and better interfaces – which equals better inter-operability’, says Mr Barrenechea.

As with any sales pitch from the technology industry, however, it is as well to be wary of the hype.

Source: Richard Waters, New architecture or just new hype? Financial Times, 8 March 2006

4. EDI

Transactional e-commerce predates the World Wide Web and service-oriented architecture by some margin. In the 1960s, electronic data interchange (EDI), financial EDI and electronic funds transfer (EFT) over secure private networks became established modes of intra- and inter-company transaction. In this section, we briefly cover EDI to give a historical context. The idea of standardized document exchange can be traced back to the 1948 Berlin Airlift, where a standard form was required for efficient management of items flown to Berlin from many locations. This was followed by electronic transmission in the 1960s in the US transport industries. The EDIFACT (Electronic Data Interchange for Administration, Commerce and Transport) standard was later produced by a joint United Nations/European committee to enable international trading. There is also a similar X12 EDI standard developed by the ANSI Accredited Standards Committee.

Clarke (1998) considers that EDI is best understood as the replacement of paper-based purchase orders with electronic equivalents, but its applications are wider than this. The types of documents exchanged by EDI include business transactions such as orders, invoices, delivery advice and payment instructions as part of EFT. There may also be pure information transactions such as a product specification, for example engineering drawings or price lists. Clarke (1998) defines EDI as:

the exchange of documents in standardised electronic form, between organisations, in an automated manner, directly from a computer application in one organisation to an application in another.

DTI (2000) describes EDI as follows:

Electronic data interchange (EDI) is the computer-to-computer exchange of structured data, sent in a form that allows for automatic processing with no manual intervention. This is usually carried out over specialist EDI networks.

It is apparent from these definitions that EDI is one form, or a subset of, electronic commerce. A key point is that direct communication occurs between applications (rather than between computers). This requires information systems to achieve the data processing and data management associated with EDI and integration with associated information systems such as sales order processing and inventory control systems.
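To make ‘structured data … with no manual intervention’ concrete, the sketch below parses a short EDIFACT-style purchase order and turns it into a record that a sales order processing system could consume. It is a simplification under stated assumptions: the sample message is an invented, unvalidated fragment, the parser handles only the default separators (`'` between segments, `+` between data elements, `:` between components) and ignores the release character and service segments, and the field names in the resulting dictionary are hypothetical.

```python
def parse_segments(message: str) -> list[tuple[str, list[list[str]]]]:
    """Split a message into (tag, elements) pairs; each element is a list of components."""
    segments = []
    for raw in message.split("'"):
        raw = raw.strip()
        if not raw:
            continue
        tag, *elements = raw.split("+")
        segments.append((tag, [element.split(":") for element in elements]))
    return segments


def to_order(message: str) -> dict:
    """Map the segments this sketch understands onto a simple order record."""
    order = {"order_number": None, "lines": []}
    for tag, elements in parse_segments(message):
        if tag == "BGM":      # beginning of message: document number is the 2nd element
            order["order_number"] = elements[1][0]
        elif tag == "LIN":    # line item: item number is in the 3rd element
            order["lines"].append({"item": elements[2][0], "quantity": None})
        elif tag == "QTY":    # quantity applies to the most recent line item
            order["lines"][-1]["quantity"] = int(elements[0][1])
    return order


# Invented sample message, for illustration only.
sample = (
    "UNH+1+ORDERS:D:96A:UN'"
    "BGM+220+PO12345+9'"
    "LIN+1++4000862141404:EN'"
    "QTY+21:48'"
    "UNT+5+1'"
)

print(to_order(sample))
```

Because both trading partners agree on the segment layout in advance, the receiving application can create the order without anyone rekeying it, which is the integration with sales order processing and inventory control that the definitions describe.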

According to IDC (1999), revenues for EDI network services were already at $1.1 billion in 1999 and forecast to reach over $2 billion by 2003. EDI is developing through new standards and integration with Internet technologies to achieve Internet EDI. IDC (1999) predicted that Internet EDI’s share of EDI revenues would climb from 12 per cent to 41 per cent over the same period.

Internet EDI enables EDI to be implemented at lower costs since, rather than using proprietary, so-called value-added networks (VANs), it uses the same EDI standard documents, but using lower-cost transmission techniques through virtual private networks (VPNs) or the public Internet. Reported cost savings are up to 90 per cent (EDI Insider, 1996). EDI Insider estimated that this cost differential would cause an increase from the 80,000 companies in the United States using EDI in 1996 to hundreds of thousands. Internet EDI also includes EDI-structured documents being exchanged by e-mail or in a more automated form using FTP.

It is apparent that there is now a wide choice of technologies for managing electronic transactions between businesses. The Yankee Group (2002) refers to these as ‘transaction management (TXM)’ technologies which are used to automate machine-to-machine information exchange between organizations. These include:

document and data translation, transformation, routing, process management, Electronic data interchange (EDI), extensible Mark-up Language (XML), Web services … Value-added networks, electronic trading networks, and other hosted solutions are also tracked in the TXM market segment.

Source: Dave Chaffey (2010), E-Business and E-Commerce Management: Strategy, Implementation and Practice, Prentice Hall (4th Edition).
