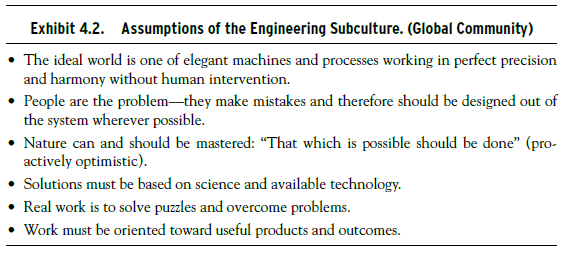

In all organizations, there is a group that represents the basic design elements of the technology underlying the work of the organization, and this group has the knowledge of how that technology is to be used. Within a given organization, they function as a subculture, but what makes this group significant is that their basic assumptions are derived from their occupational community and their education. Though engineer designers work within an organization, their occupational identification is much broader and cuts across nations and industries. In technically based companies, the founders are often engineers in this sense and create an organization that is dominated by these assumptions. DEC was such an organization, and, as we will see later, the domination of the engineering subculture over other business functions is part of the explanation of DEC's economic success as well as its failure (Schein, 2003; Kunda, 1992). The basic assumptions of the engineering subculture are listed in Exhibit 4.2.

The shared assumptions of this subculture are based on common education, work experience, and job requirements. Their education reinforces the view that problems have abstract solutions, and those solutions can, in principle, be implemented in the real world with products and systems that are free of human foibles and errors. Engineers, using this term in the broadest sense, are designers of products and systems that have utility, elegance, permanence, efficiency, safety, and, maybe, as in the case of architecture, even aesthetic appeal, but they are basically designed to require standard responses from their human operators or, ideally, to have no human operators at all.

In the design of complex systems such as jet aircraft or nuclear plants, the engineer prefers a technical routine to ensure safety rather than relying on a human team to manage the contingencies that might arise. Engineers recognize the human factor and design for it, but their preference is to make things as automatic as possible because of the basic assumption that it is ultimately humans who make mistakes. Ken Olsen, the founder of DEC, would get furious if someone said there was a “computer error,” pointing out that the machine does not make mistakes, only humans do. Safety is built into the designs themselves. I once asked an Egyptian Airlines pilot whether he preferred the Russian or U.S. planes. He answered immediately that he preferred the U.S. planes and gave as his reason that the Russian planes have only one or two back-up systems, while the U.S. planes have three back-up systems. In a similar vein, I overheard two engineers saying to each other during a landing at the Seattle airport that the cockpit crew was totally unnecessary. The plane could easily be flown and landed by computer.

In other words, one of the key themes in the subculture of engineering is the preoccupation with designing humans out of the systems rather than into them. Recall that the San Francisco Bay Area Rapid Transit system, known as BART, has totally automated trains. In this case, it was not the operators but the customers who objected to this degree of automation, forcing management to put human operators onto each train even though they had nothing to do except to reassure people by their presence. Automation and robotics are increasingly popular because of the lower cost and greater reliability of systems that have no humans in them. But, as pointed out earlier, humans are needed when conditions change and innovative responses are required.

In Thomas’s study, the engineers were very disappointed that the operations of the elegant machine they were purchasing would be constrained by the presence of more operators than were needed, by a costly retraining program, and by management-imposed policies that had nothing to do with “real engineering” (Thomas, 1994). In my own research on information technology I found that the engineers fundamentally wanted the operators to adjust to the language and characteristics of the particular computer system that was being implemented and were quite impatient with the “resistance to change” that the operators were exhibiting. From the point of view of the users—the operators—not only was the language arcane, but the systems were often not considered useful for solving the operational problems (Schein, 1992).

I have focused on engineers in technical organizations, but their equivalent exists in all organizations. In medicine, it would be the doctors who are developing a new surgical technique; in law offices, the designers of computerized systems for creating necessary documents; in the insurance industry, the actuaries and product designers; and in the financial world, the designers of new and sophisticated financial instruments. Their job is not to do the daily work but to design new products, new structures, and new processes to make the organization more effective.

Both the operators and the engineers often find themselves out of alignment with a third critical culture, the culture of executives.

Source: Schein, Edgar H. (2010), Organizational Culture and Leadership, 4th edition, Jossey-Bass.
