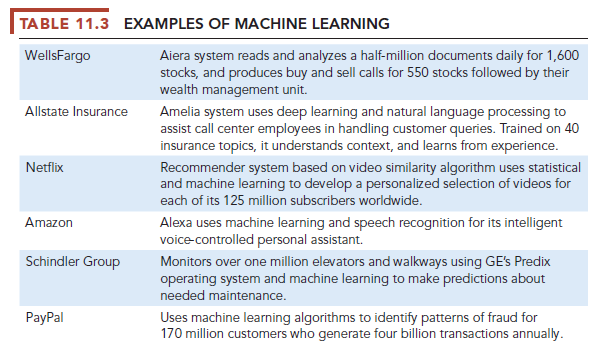

More than 75 percent of AI development today involves some kind of machine learning (ML) accomplished by neural networks, genetic algorithms, and deep learning networks, with the main focus on finding patterns in data, and classifying data inputs into known (and unknown) outputs. Machine learning is based on an entirely different AI paradigm than expert systems. In machine learning there are no experts, and there is no effort to write computer code for rules reflecting an expert’s understanding. Instead, ML begins with very large data sets with tens to hundreds of millions of data points and automatically finds patterns and relationships by analyzing a large set of examples and making a statistical inference. Table 11.3 provides some examples of how leading business firms are using various types of machine learning.

Facebook has over 200 million monthly users in the United States who spend an average of 35 minutes on site daily. The firm displays an estimated 1 billion ads monthly to this audience, and it decides which ads to show each person in less than one second. For each person, Facebook bases this decision on the prior behavior of its users, including information shared (posts, comments, Likes), the activity of their social network friends, background information supplied to Facebook (age, gender, location, devices used), information supplied by advertisers (email address, prior purchases), and user activity on apps and other websites that Facebook can track. Facebook uses ML to identify patterns in the dataset, and to estimate the probability that any specific user will click on a particular ad based on the patterns of behavior they have identified.

Analysts estimate that Facebook uses at least 100,000 servers located in several very large-scale “hyper datacenters” to perform this task. At the end of this process is a simple show ad/no show ad result.

The current response rate (click rate) to Facebook ads is about 0.1 percent, roughly four times that of an untargeted display ad although not as good as targeted email campaigns (about 3 percent), or Google Search ads (about 2 percent). All of the very large Internet consumer firms, including Amazon, Alphabet’s Google, Microsoft, Alibaba, Tencent, Netflix, and Baidu, use similar ML algorithms. Obviously, no human or group of humans could achieve these results given the enormous database size, the speed of transactions, or the complexity of working in real time. The benefits of ML illustrated by this brief example come down to an extraordinary ability to recognize patterns at the scale of millions of people in a matter of seconds, and classify objects into discrete categories.

Supervised and Unsupervised Learning

Nearly all machine learning today involves supervised learning, in which the system is “trained” by providing specific examples of desired inputs and outputs identified by humans in advance. A very large database is developed, say ten million photos posted on the Internet, and then split into two sections, one a development database and the other a test database. Humans select a target, let’s say to identify all photos that contain a car image. Humans feed a large collection of verified pictures that contain a car image into a neural network (described below) that proceeds iteratively through the development database in millions of cycles, until eventually the system can identify photos with a car. The machine learning system is then tested using the test database to ensure the algorithms can achieve the same results with different photos. In many cases, but not all, machine learning can come close to or equal human efforts, but on a very much larger scale. Over time, with tweaking by programmers, and by making the database even bigger, using ever larger computing systems, the system will improve its performance, and in that sense, can learn. Supervised learning is one technique used to develop autonomous vehicles that need to be able to recognize objects around them, such as people, other cars, buildings, and lines on the pavement to guide them (see the chapter-ending case study).

In unsupervised learning, the same procedures are followed, but humans do not feed the system examples. Instead, the system is asked to process the development database and report whatever patterns it finds. For instance, in a seminal research effort often referred to “The Cat Paper,” researchers collected 10 million YouTube photos from videos and built an ML system that could detect human faces without labeling or “teaching” the machine with verified human face photos (Le et al., 2011). Researchers developed a brute force neural network computer system composed of 1,000 machines with 16,000 core processors loaned by Google. The systems processors had a total of 1 billion connections to one another, creating a very large network that imitated on a small scale the neurons and synapses (connections) of a human brain. The result was a system that could detect human faces in photos, as well as cat faces and human bodies. The system was then tested on 22,000 object images on ImageNet (a large online visual database), and achieved a 16 percent accuracy rate. In principle then, it is possible to create machine learning systems that can “teach themselves” about the world without human intervention. But there’s a long way to go: we wouldn’t want to use autonomous cars that were guided by systems with a 16 percent accuracy rate! Nevertheless, this research was a 75 percent improvement over previous efforts.

To put this in perspective, a one-year-old human baby can recognize faces, cats, tables, doors, windows, and hundreds of other objects it has been exposed to, and continuously catalogs new experiences that it seeks out by itself for recognition in the future. But babies have a huge computational advantage over our biggest ML research systems. The human adult brain has an estimated 84 billion neurons, each with over 10,000 connections to other neurons (synapses), and over one trillion total connections in its network (brain). Modern homo sapiens have been programed (by nature) for an estimated 300,000 years, and their predecessors for 2.5 million years. For these reasons, machine learning is applicable today in a very limited number of situations where there are very large databases and computing facilities, most desired outcomes are already defined by humans, the output is binary (0,1), and where there is a very talented and large group of software and system engineers working the problem.

Source: Laudon Kenneth C., Laudon Jane Price (2020), Management Information Systems: Managing the Digital Firm, Pearson; 16th edition.

18 Jun 2021

18 Jun 2021

18 Jun 2021

19 Jun 2021

21 Jun 2021

21 Jun 2021