A neural network is composed of interconnected units called neurons. Each neuron can take data from other neurons and transfer data to other neurons in the system. Artificial neurons are not biological entities like those in the human brain; they are software programs and mathematical models that perform the input and output functions of neurons. Researchers control the strength of the connections (the weights) using a Learning Rule, an algorithm that systematically alters the strength of the connections among the neurons until the network produces the desired output, such as identifying a picture of a cancerous tumor, fraudulent credit card transactions, or suspicious telephone calling patterns.
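To make the idea of connection strength concrete, the sketch below models a single artificial neuron in Python. It is an illustration only: the function name `neuron_output`, the sigmoid activation, and the sample numbers are assumptions chosen for the example, not details taken from the text.

```python
import math

def neuron_output(inputs, weights, bias):
    """Combine incoming signals into one outgoing signal.

    Each input is multiplied by the weight (strength) of its connection,
    the results are summed with a bias term, and a sigmoid function
    squashes the total into the range 0..1.
    """
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))

# Hypothetical signals arriving from three other neurons.
signals = [0.5, 0.8, 0.1]

# The same signals sent over weak and over strong connections.
weak = neuron_output(signals, [0.1, 0.1, 0.1], bias=0.0)
strong = neuron_output(signals, [2.0, 2.0, 2.0], bias=0.0)

print(f"weak connections:   {weak:.3f}")
print(f"strong connections: {strong:.3f}")
```

With identical inputs, the neuron with stronger connections produces a larger output. Changing the weights is therefore the lever a Learning Rule uses to change what the network computes.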

Neural networks are pattern detection programs. Using machine learning algorithms and computational models loosely based on how the biological human brain is thought to operate, they find patterns and relationships in amounts of data too large and complicated for a human being to analyze. Neural networks learn patterns by sifting through large quantities of data and ultimately finding pathways through a network of thousands of neurons. Some pathways are more successful than others in their ability to identify objects like cars, animals, faces, and voices, and there may be millions of pathways through the data. An algorithm (the Learning Rule mentioned above) identifies the successful pathways and strengthens the connections among the neurons in them. This process is repeated thousands or millions of times until the best, or optimal, pathways through the data are identified. The process stops when an acceptable level of pattern recognition is reached, for instance, identifying cancerous tumors about as well as humans, or even better than humans.
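The text does not name a specific Learning Rule. As one classic illustration, the sketch below uses the perceptron rule, which adjusts the connection weights whenever the network makes a wrong prediction and leaves them alone when it is right. The toy data (a purchase is flagged only when it is both large and made abroad), the function names, and the parameter values are all invented for this example.

```python
def predict(weights, bias, features):
    """Return 1 (flag) if the weighted sum is positive, else 0."""
    total = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if total > 0 else 0

def train_perceptron(examples, epochs=20, learning_rate=1.0):
    """Repeatedly pass over the examples, adjusting weights after each error."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            # error is +1 or -1 on a wrong prediction, 0 on a correct one,
            # so correct predictions leave the weights untouched.
            error = label - predict(weights, bias, features)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias

# Toy examples: ([large_amount, made_abroad], flagged).
examples = [
    ([0, 0], 0),
    ([0, 1], 0),
    ([1, 0], 0),
    ([1, 1], 1),
]

weights, bias = train_perceptron(examples)
predictions = [predict(weights, bias, features) for features, _ in examples]
print(predictions)  # [0, 0, 0, 1]
```

On this tiny data set the rule settles after a handful of passes. Real networks use more elaborate rules and far more data, but the repeated cycle of predicting, comparing, and adjusting is the same.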

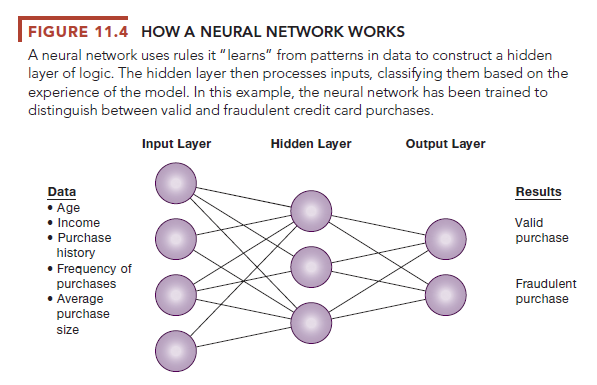

Figure 11.4 represents one type of neural network comprising an input layer, a processing layer, and an output layer. Humans train the network by feeding it a set of outcomes they want the machine to learn. For instance, if the objective is to build a system that can identify patterns in fraudulent credit card purchases, the system is trained using actual examples of fraudulent transactions. The data set may be composed of a million examples of fraudulent transactions. The data set is divided into two segments: a training data set and a test data set. The training data set is used to train the system. After millions of training runs, the program will, ideally, have identified the best path through the data. To verify the accuracy of the system, it is then run on the test data set, which it has not analyzed before. If it is successful there, the system is tested on new data sets. The neural network in Figure 11.4 has learned how to identify a likely fraudulent credit card purchase.
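The division into training and test data can be sketched in a few lines of Python. To keep the example short, a simple amount threshold stands in for the neural network, and the transactions are synthetic and unrealistically clean. The function names, the 80/20 split, and every number are assumptions made for illustration.

```python
import random

def split_data(examples, test_fraction=0.2, seed=0):
    """Shuffle a copy of the examples and split it into training and test sets."""
    shuffled = examples[:]
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]

def fit_threshold(training_set):
    """'Train' the stand-in model: midpoint between mean fraud and mean legitimate amounts."""
    fraud = [amount for amount, label in training_set if label == 1]
    legit = [amount for amount, label in training_set if label == 0]
    return (sum(fraud) / len(fraud) + sum(legit) / len(legit)) / 2

def accuracy(threshold, data_set):
    """Fraction of transactions the threshold classifies correctly."""
    correct = sum(1 for amount, label in data_set
                  if (1 if amount > threshold else 0) == label)
    return correct / len(data_set)

# Synthetic (amount, label) pairs: label 1 = fraudulent, 0 = legitimate.
rng = random.Random(42)
examples = ([(rng.uniform(5, 200), 0) for _ in range(900)]
            + [(rng.uniform(1000, 2000), 1) for _ in range(100)])

training_set, test_set = split_data(examples)
threshold = fit_threshold(training_set)      # uses the training data only
test_accuracy = accuracy(threshold, test_set)  # uses data the model has not seen

print(len(training_set), len(test_set))
print(f"accuracy on unseen test data: {test_accuracy:.2%}")
```

The key design point is that `fit_threshold` never sees the test set, so the accuracy figure reflects how the model handles transactions it was not trained on.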

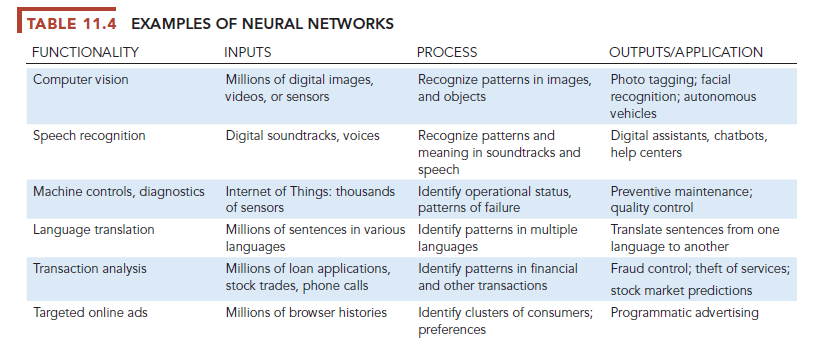

Neural network applications in medicine, science, and business address problems in pattern classification, prediction, and control and optimization. In medicine, neural network applications are used for screening patients for coronary artery disease, for diagnosing epilepsy and Alzheimer’s disease, and for performing pattern recognition of pathology images, including certain cancers. The financial industry uses neural networks to discern patterns in vast pools of data that might help investment firms predict the performance of equities, corporate bond ratings, or corporate bankruptcies. Visa International uses a neural network to help detect credit card fraud by monitoring all Visa transactions for sudden changes in the buying patterns of cardholders. Table 11.4 provides examples of neural networks.

1. “Deep Learning” Neural Networks

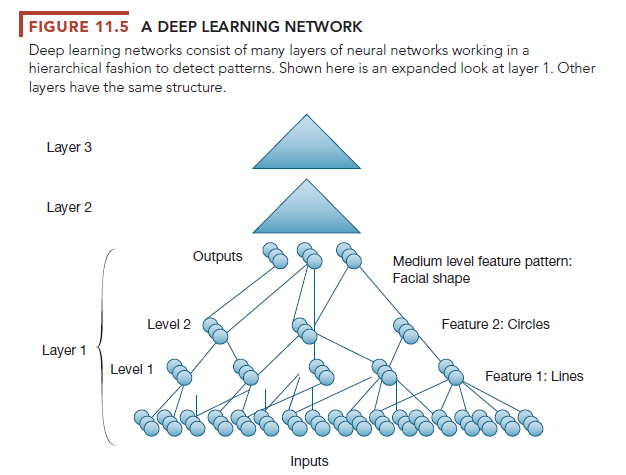

“Deep learning” neural networks are more complex, with many layers of transformation of the input data to produce a target output. Individual neurons are also called nodes, and collections of neurons are called layers. Deep learning networks are in their infancy, and are used almost exclusively for pattern detection on unlabeled data, where the system is not told what to look for specifically but is left to discover patterns in the data. The system is expected to be self-taught. See Figure 11.5.

For instance, in our earlier example of unsupervised learning involving a machine learning system that could identify cats (The Cat Paper) and other objects without training, the system used was a deep learning network. It consisted of three layers of neural networks (layers 1, 2, and 3). Each of these layers had two levels of pattern detection (levels 1 and 2). Each level was developed to identify a low-level feature of the photos: level 1 identified lines in the photos, and level 2 identified circles. The result of the first layer may be blobs and fuzzy edges. The second and third layers refine the images emerging from the first layer until, at the end of the process, the system can distinguish cats, dogs, and humans, although in this case not very well, with a 16 percent accuracy rate.
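The idea of many layers of transformation can be sketched as a chain in which each layer's output becomes the next layer's input. The example below is not the network from the Cat Paper. The layer sizes, the weights, and the tanh activation are arbitrary assumptions, and no learning takes place; it shows only how data flows through stacked layers.

```python
import math

def layer_forward(inputs, weights, biases):
    """Run one layer: each row of weights feeds one neuron in the layer."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(math.tanh(total))  # squash into the range -1..1
    return outputs

def network_forward(inputs, layers):
    """Pass the data through every layer in turn."""
    activation = inputs
    for weights, biases in layers:
        activation = layer_forward(activation, weights, biases)
    return activation

# Three stacked layers with made-up weights: 3 inputs -> 4 -> 2 -> 1 output.
layers = [
    ([[0.5, -0.2, 0.1],
      [0.3, 0.8, -0.5],
      [-0.6, 0.1, 0.4],
      [0.2, 0.2, 0.2]], [0.0, 0.1, -0.1, 0.0]),
    ([[0.4, -0.7, 0.2, 0.1],
      [-0.3, 0.5, 0.6, -0.2]], [0.05, -0.05]),
    ([[0.7, -0.3]], [0.0]),
]

output = network_forward([1.0, 0.5, -1.0], layers)
print(output)
```

In a trained deep network the early layers tend to respond to simple features and the later layers to combinations of them, which is the refinement the example above describes.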

Many pundits believe deep learning networks come closer to the “Grand Vision” of AI, in which ML systems would be capable of learning like a human being. Others who work in ML and deep learning are more critical (Marcus, 2018; Pearl, 2016).

2. Limitations of Neural Networks and Machine Learning

Neural networks currently have a number of limitations. They require very large data sets to identify patterns. Large data sets often contain many patterns that are nonsensical, and it takes humans to choose which patterns “make sense.” Many patterns in large data sets are also ephemeral: there may be a pattern in the stock market, or in the performance of professional sports teams, but it does not last long. And in many important decision situations there are no large data sets at all. Should you apply to College A or College B? Should we merge with another company?

Neural networks, machine learning systems, and the people who work with them cannot explain how the system arrived at a particular solution. For instance, in the case of the IBM Watson computer playing Jeopardy, researchers could not say exactly why Watson chose the answers it did, only that they were either right or wrong. Most real-world ML applications in business involve classifying digital objects into simple binary categories (yes or no; 0 or 1). But many of the significant problems facing managers, firms, and organizations do not have binary solutions. Neural networks may not perform well if their training covers too little or too much data. AI systems have no sense of ethics: they may recommend actions that are illegal or immoral. In most current applications, AI systems are best used as tools for relatively low-level decisions, aiding, but not substituting for, managers.

Source: Laudon Kenneth C., Laudon Jane Price (2020), Management Information Systems: Managing the Digital Firm, Pearson; 16th edition.
